Anthropic Didn’t Just Raise Prices. It Downgraded AI Agents.

This Was Not Just a Pricing Change

When Anthropic cut off Claude subscription usage for third-party harnesses like OpenClaw, it did not just change billing. It changed the shape of AI work.

For casual users, this may look like a pricing dispute. For people actually running serious AI agent workflows, it is much bigger than that. A whole class of high-agency systems just got pushed from being a practical daily operator back to being an expensive occasional assistant.

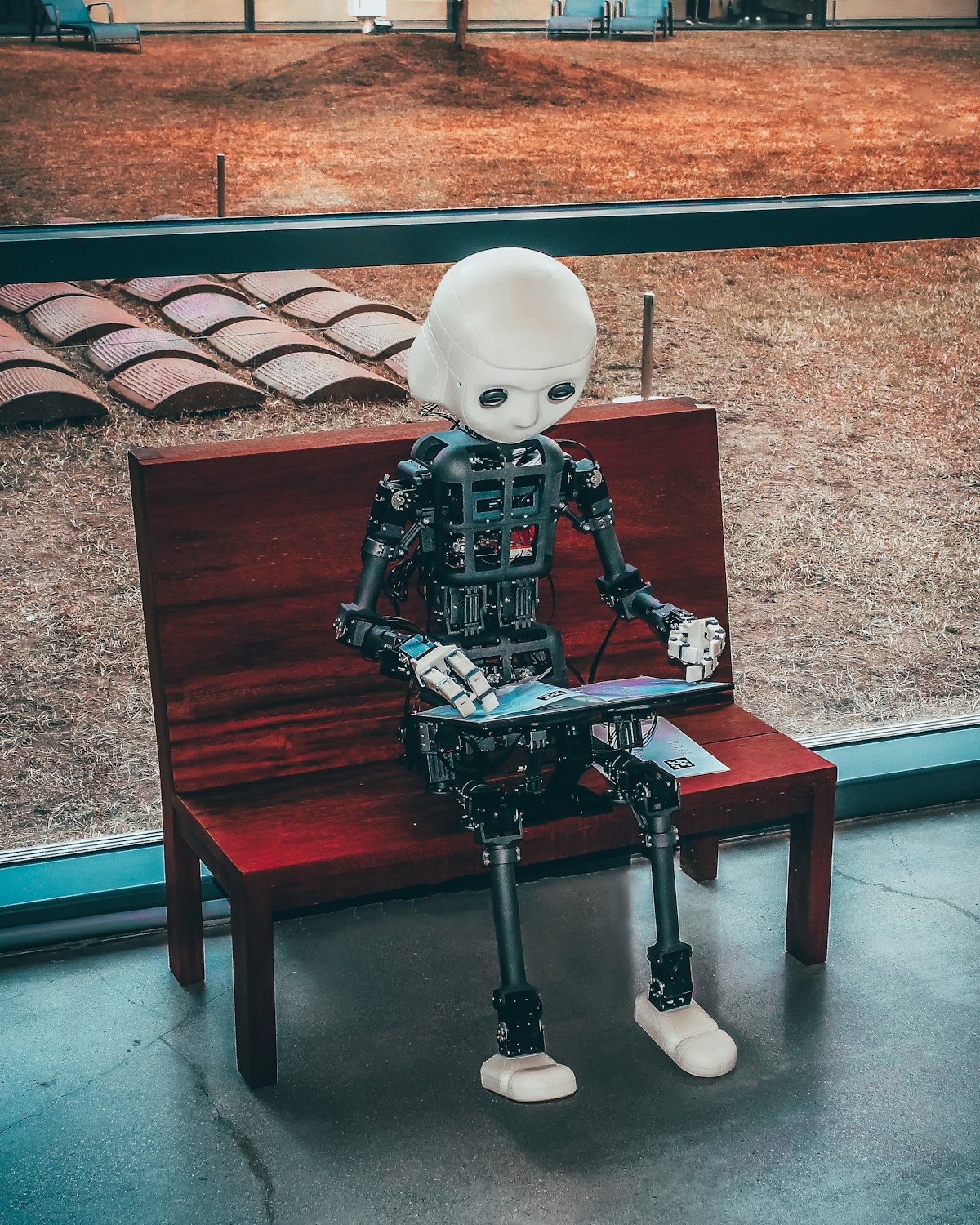

From Executive to Intern

Here is the blunt version: moving from a top-tier Claude setup inside OpenClaw to GPT-5.4 in the same environment does not feel like swapping one excellent employee for another.

It feels like downgrading a chief executive into an intern.

That is not a knock on OpenAI. GPT-5.4 is strong in many contexts. But in day-to-day agent operation, the gap is obvious: it shows up in sustained context, judgment, execution quality, and the ability to behave like a real operator instead of a clever autocomplete engine.

What Actually Changed

According to TechCrunch, Anthropic told customers that starting April 4, subscribers could no longer use Claude subscription limits for third-party harnesses including OpenClaw. Instead, they would need separate pay-as-you-go billing for that usage.

Anthropic may have valid internal reasons for that decision. But user experience does not care why the downgrade happened. Users care that the workflow they built their work around suddenly got more expensive, less predictable, and less viable.

The Bigger Lesson: Dependence Is Fragility

This moment exposed a larger truth about AI infrastructure: if your best workflows depend on one vendor keeping its policy friendly, you do not really own your AI stack.

You are renting capability from a company whose incentives can change overnight.

That is why Andrej Karpathy’s recent "file over app" and bring-your-own-AI framing matters so much. The future is not just smarter models. It is agent systems where your memory is portable, your workflows are inspectable, and your model layer can be swapped without rebuilding the whole stack.
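To make "swappable model layer" and "portable memory" concrete, here is a minimal Python sketch. It is not how OpenClaw or any vendor SDK works. The names `ModelBackend`, `HostedStub`, `LocalStub`, and `Agent` are hypothetical, and the two backends are stubs that call no real API. The only point is the shape: the agent talks to an interface, and its memory lives in a plain JSON file you can read, move, or hand to a different backend.

```python
import json
from dataclasses import dataclass
from pathlib import Path
from typing import Protocol


class ModelBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class HostedStub:
    """Stand-in for a hosted model; a real one would call a vendor API here."""

    def complete(self, prompt: str) -> str:
        lines = prompt.count("\n") + 1
        return f"[hosted] saw {lines} lines of context"


class LocalStub:
    """Stand-in for an open or local model behind the same interface."""

    def complete(self, prompt: str) -> str:
        lines = prompt.count("\n") + 1
        return f"[local] saw {lines} lines of context"


@dataclass
class Agent:
    backend: ModelBackend   # the swappable model layer
    memory_path: Path       # memory is a plain file, not vendor state

    def _load(self) -> list:
        # Read prior turns from disk; start empty if the file is missing.
        if self.memory_path.exists():
            return json.loads(self.memory_path.read_text())
        return []

    def ask(self, message: str) -> str:
        history = self._load()
        prompt = "\n".join(history + [message])
        reply = self.backend.complete(prompt)
        # Persist both sides of the turn so any backend can pick it up later.
        history += [message, reply]
        self.memory_path.write_text(json.dumps(history, indent=2))
        return reply
```

In this sketch, changing vendors is one assignment (`agent.backend = LocalStub()`), and the next call still sees the full history because that history sits in `memory_path` rather than inside any one provider's session. A real system would need much more (tool calls, token limits, prompt formats that differ per model), so treat this as an illustration of the ownership boundary, not a design.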

Why Hugging Face and Open Models Matter More Now

Dave Morin’s reaction captured the opportunity clearly: liberate OpenClaw with open or local models.

Open models are not yet universally better than Claude Opus 4.6. But this is how market transitions happen: the incumbent proves the value, then makes the value painful to access, and the ecosystem races to build the open alternative.

Hugging Face matters because it lowers the cost of portability. It gives builders a way to source, test, host, and swap models without tying their entire agent strategy to one company’s pricing or policy decisions.

The Bottom Line

This was not just a billing update. It was a reminder that if your AI agent can be nerfed by someone else’s policy email, it was never fully yours.

Anthropic built one of the best model experiences in the market. But by making third-party agent usage materially harder, it may have accelerated the exact future that weakens its control: open, portable, user-owned agent systems.

And once you have felt the difference between an AI executive and an AI intern, you do not forget it.
